diffusion-models

HERS: Hidden-Pattern Expert Learning for Risk-Specific Vehicle Damage Adaptation in Diffusion Models

HERS presents a domain-adaptive diffusion framework for controllable, realistic, and trustworthy vehicle damage synthesis. The method decomposes complex damage generation into a set of risk-specific expert modules. Each expert specializes in a particular damage type, such as dents, scratches, broken lights, or cracked paint, and is trained on self-supervised image–text pairs without manual annotation. These experts are later integrated into a unified diffusion model that balances specialization with generalization, enabling precise control over damage attributes while maintaining visual coherence. Extensive experiments across multiple diffusion backbones demonstrate consistent improvements in text–image alignment and human preference over standard fine-tuning baselines. Beyond visual fidelity, HERS highlights broader implications for auditability, fraud prevention, and the responsible deployment of generative models in high-stakes domains, underscoring the need for trustworthy and risk-aware diffusion systems in applications such as automated insurance assessment.
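The abstract does not say how the experts are integrated into the unified model. As a rough illustration only, the sketch below assumes each expert is stored as a set of weight deltas relative to a shared base model (in the spirit of LoRA-style adapters) and that integration is a normalized weighted sum of those deltas. The function `merge_experts`, the parameter name `attn.w`, and the mixing coefficients are all hypothetical and are not taken from HERS.

```python
from typing import Dict, List

# A toy "state dict": parameter name -> flat list of weights.
Weights = Dict[str, List[float]]


def merge_experts(
    base: Weights,
    expert_deltas: Dict[str, Weights],
    mix: Dict[str, float],
) -> Weights:
    """Add a normalized weighted sum of per-expert weight deltas to the base.

    Assumption (not from the paper): each expert is a delta on top of the
    same base weights, and merging is a simple convex combination.
    """
    total = sum(mix.values())
    if total <= 0:
        raise ValueError("mixing coefficients must sum to a positive value")

    merged: Weights = {}
    for name, base_vals in base.items():
        out = list(base_vals)  # copy so the base weights stay untouched
        for expert, delta in expert_deltas.items():
            coeff = mix.get(expert, 0.0) / total
            for i, d in enumerate(delta[name]):
                out[i] += coeff * d
        merged[name] = out
    return merged


# Hypothetical example: two damage-type experts over one parameter tensor.
base = {"attn.w": [1.0, 2.0]}
deltas = {
    "dent": {"attn.w": [0.2, 0.0]},
    "scratch": {"attn.w": [0.0, -0.4]},
}
merged = merge_experts(base, deltas, {"dent": 1.0, "scratch": 1.0})
```

Equal coefficients give each expert the same influence. Raising one coefficient would bias the merged model toward that damage type, which is one simple way to picture the trade-off between specialization and generalization that the abstract describes.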

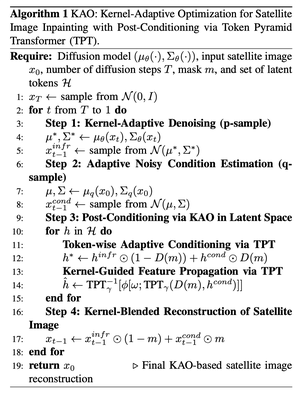

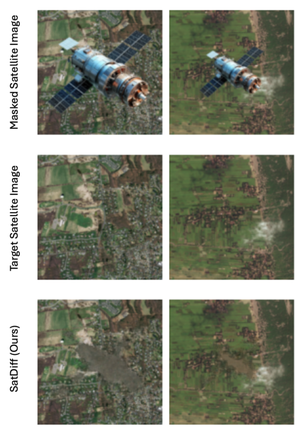

Satellite image inpainting is a critical task in remote sensing, requiring accurate restoration of missing or occluded regions for reliable image analysis. In this paper, we present SatDiff, a diffusion-based inpainting framework designed for very high-resolution (VHR) satellite datasets such as DeepGlobe and the Massachusetts Roads Dataset. Building on our previous work, SatInPaint, we refine the approach to achieve higher recall and overall performance. SatDiff introduces a novel Latent Space Conditioning technique that leverages a compact latent space for efficient and precise inpainting. Additionally, we integrate Explicit Propagation into the diffusion process, enabling forward-backward fusion for improved stability and accuracy. Inspired by encoder-decoder architectures like the Segment Anything Model (SAM), SatDiff adapts to diverse satellite imagery scenarios. By balancing the efficiency of preconditioned models with the flexibility of postconditioned approaches, SatDiff establishes a new benchmark on VHR satellite datasets, offering a scalable and high-performance solution for satellite image restoration. The code for SatDiff is publicly available at https://github.com/kaopanboonyuen/SatDiff.
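
The abstract does not spell out how Latent Space Conditioning or Explicit Propagation work, so the sketch below does not reproduce either one. It illustrates a generic, widely used way to condition a diffusion sampler on known pixels during inpainting: at each reverse step, the known region is replaced by a forward-noised copy of the clean latent, and only the masked region keeps the model's sample. The function names, the flat-list latent, and the mask convention (1 = missing, 0 = known) are assumptions made for this example.

```python
import math
import random
from typing import List


def noise_known(z0: List[float], alpha_bar: float, rng: random.Random) -> List[float]:
    """Forward-diffuse the clean latent z0 to the noise level set by alpha_bar.

    Uses the standard closed form z_t = sqrt(alpha_bar) * z0 + sqrt(1 - alpha_bar) * eps.
    """
    signal = math.sqrt(alpha_bar)
    noise = math.sqrt(1.0 - alpha_bar)
    return [signal * z + noise * rng.gauss(0.0, 1.0) for z in z0]


def blend_step(
    z_generated: List[float],
    z_known_noised: List[float],
    mask: List[int],
) -> List[float]:
    """Keep the sampler's values where mask == 1 (missing) and the
    noised known values where mask == 0 (observed)."""
    return [m * g + (1 - m) * k for g, k, m in zip(z_generated, z_known_noised, mask)]


# Hypothetical example: a 3-element latent whose middle element is missing.
rng = random.Random(0)
clean_latent = [1.0, 2.0, 3.0]
mask = [0, 1, 0]
sampler_output = [9.0, 9.0, 9.0]  # stand-in for one reverse-diffusion sample

# alpha_bar = 1.0 corresponds to the final, noise-free step.
known = noise_known(clean_latent, alpha_bar=1.0, rng=rng)
blended = blend_step(sampler_output, known, mask)
```

In a real sampler this blend would run once per denoising step with the step's own `alpha_bar`, so the observed region always sits at the same noise level as the region being generated.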